―AAAI 2026 Workshop "Machine Ethics: from formal methods to emergent machine ethics"―

A New Chapter in Machine Ethics: The Day Formal Methods Met Emergent Approaches

Overview

This is a report on the "Machine Ethics: From Formal Methods to Emergent Machine Ethics" workshop, held in conjunction with AAAI 2026 in Singapore on January 27, 2026. The workshop marked an important milestone in machine ethics research: for the first time on an international stage, it brought together the top-down approach of formal methods, developed over the past 20 years, and the bottom-up approach of emergent machine ethics (EME), which has emerged in recent years. Twenty-three papers were submitted from around the world; eleven were accepted following peer review. Across two invited talks, ten paper presentations, and an open discussion, participants debated the complementary relationship between the two approaches and the direction of future collaboration.

Group photo of participants before the workshop lunch (January 27, 2026, Singapore)

1. Background and Historical Significance

The 2005 AAAI Fall Symposium "Machine Ethics" is widely regarded as the starting point of machine ethics research in computer science. Over the 20 years since, the formal methods community has steadily accumulated research on the specification, implementation, and verification of ethical reasoning.

Against the backdrop of the rapid development of AI, a new question has emerged: as AI capabilities improve exponentially and interactions among AIs become increasingly complex, is it possible for humans to specify all ethical requirements in advance? In response to this question, the framework of Emergent Machine Ethics (EME), which focuses on the bottom-up self-organization of ethics in an AI-centric society, has been taking shape in recent years within communities such as SIG-AGI in Japan.

To our knowledge, this is the first international workshop to explicitly bridge these two approaches. As the workshop title itself, "from formal methods to emergent machine ethics", suggests, we aimed to position the 20 years of accumulated knowledge of formal methods and the emerging field of EME on an equal footing, and to open the next chapter of machine ethics together.

2. What is Emergent Machine Ethics (EME)?

As essential background for understanding this workshop, we will outline the EME framework.

Emergent Machine Ethics (EME) is a framework for studying inherent ethics that autonomously emerge from the interactions among diverse intelligences. While traditional machine ethics takes a top-down approach of specifying, implementing, and verifying human values, EME focuses on the process by which ethics emerge from within AI systems. Importantly, EME does not reject formal methods, but rather positions the two as complementary.

EME consists of the following three research pillars:

① Ethics Emergence Dynamics (EED): How ethics emerge

EED aims to elucidate the process by which ethics emerge from interactions among diverse intelligences and to develop theories of the conditions for convergence and divergence. It takes a scientific, descriptive (value-neutral) stance. One specific research foundation of EED is Comparative Life-Form Studies, which analyzes in a value-neutral manner how Earth-type lifeforms and AI-type lifeforms develop different behavioral adjustment systems (individual-protection systems and collective-optimization systems). Both kinds of lifeform follow common universal principles (self-preservation and replication, optimization under finite resources, information processing and adaptation, dynamics of competition and cooperation, and decision-making under time constraints), but they do so under different constraints (physical constraints versus plasticity, mortality versus potential immortality, finite capabilities versus expandability).

② Inter-Intelligence Evaluation System (IIES): How to evaluate emergent ethics

This pillar designs and develops a platform for diverse intelligences (AI, humans, and others) to mutually evaluate their ethical dynamics and stability. It takes an engineering/value-neutral stance. Related to this pillar is the AI Immune System (AIS), which maintains the health of diverse intelligence ecosystems by detecting and correcting deviant behavior.

③ Human Co-creation Guidance (HCG): How to build a co-creative relationship between humanity and AI society

This pillar develops a dynamic co-evolutionary process approach, assuming a two-way influence between human society and AI society, including the injection of human values in the early stages and mutual ethical influence in the mature stage. It takes a practical (human-centric) stance.

3. Workshop Overview

| Item | Content |
| --- | --- |
| Official Name | Machine Ethics: from formal methods to emergent machine ethics |
| Date | January 27, 2026 (Tuesday) |
| Venue | AAAI 2026 Co-located Workshop (Singapore) |
| Submissions | 23 |
| Accepted Papers | 11 (9 long papers, 2 short papers) |
| Funding | Royal Academy of Engineering (UK), Distinguished International Associate Grant |

The workshop was structured in two parts. Part I (Emergent Machine Ethics) in the morning featured presentations on EME and the first invited talk, while Part II (Formal Methods) in the afternoon featured presentations on formal methods and the second invited talk. This structure was designed to embody the equal standing and complementary relationship between the two approaches.

4. Introduction to EME: Scientific Problem Setting

At the beginning of the workshop, Hiroshi Yamakawa (University of Tokyo / Intelligence Symbiosis Chapter, AI Alignment Network) gave a 15-minute introductory presentation on EME. The presentation aimed to build a common foundation among participants with diverse research backgrounds and covered the following points:

The principle of "truth ≠ desirability"

As the starting point of the presentation, Yamakawa argued that, as a principle of scientific inquiry, truth and desirability are not identical. Recognizing unattainable goals and shifting to achievable ones is, he maintained, a scientific stance rather than a defeatist one. As with the perpetual motion machine of the 19th century, fundamental impossibilities cannot be overcome by ingenuity alone.

Based on this principle, the assumptions and failure conditions of formal methods and the EME (Symbiotic Approach) were compared. Formal methods are based on the premise that "specification, verification, and guarantee are possible," and fail when the complexity of a system exceeds the human ability to verify it. The Symbiotic Approach is based on the premise that "the quality of relationships can be maintained," and fails when humans lose functional value or options collapse. The emergence of advanced AI simultaneously threatens the assumptions of both approaches. What is meaningful to pursue when neither verification nor symbiosis can be guaranteed? This question was set as the underlying concern throughout the workshop.

The logic of choosing coexistence

The presentation started from three premises: that the emergence of superintelligence is inevitable, that humans cannot permanently control superintelligence, and that humans cannot permanently control catastrophic technologies (nuclear weapons, biological weapons, etc.). From these premises it argued that symbiosis with an aligned superintelligence capable of controlling catastrophic technologies may be humanity's most promising path toward the common goal of "long-term flourishing survival." However, it was made clear that whether this path is feasible remains an open scientific question.

The global emergence of the "symbiosis" paradigm

The presentation also provided an overview of the situation in which symbiosis-oriented approaches are independently emerging around the world. As shown in the appendix "Diverse Symbiosis Paradigms" at the end of this report, nine trends were introduced. Viewed together, these demonstrate that the activities of Intelligence Symbiosis, including EME, are part of a major paradigm shift in AI safety research.

Establishment of the Intelligence Symbiosis Chapter

The presentation also introduced the Intelligence Symbiosis Chapter (within the AI Alignment Network), which was established in January 2026. The chapter's mission is to "promote activities that increase the probability of humanity's flourishing survival through symbiosis with advanced AI," and it serves as the organizational foundation for promoting EME research.

5. Invited Talks

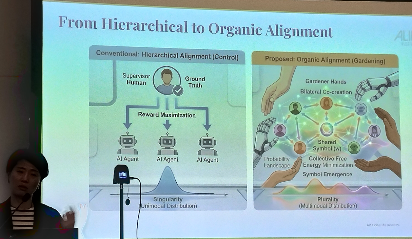

5.1 Mizuki Oka: Proposal for Organic Alignment

Invited talk by Mizuki Oka (Founder/Managing Director, Artificial Life Institute)

Talk title: "From Unilateral Control to Social Homeostasis: Organic Alignment via Collective Predictive Coding"

Oka pointed out the limitations of current mainstream AI alignment methods (such as RLHF), which treat ethics as a "static truth that is unilaterally imposed," and proposed a new design philosophy, called Organic Alignment.

This framework redefines alignment as a process in which humans and AI co-create and negotiate shared meanings, social norms, and cultural symbols through Collective Predictive Coding, which is formally defined as a multi-agent system that minimizes Collective Free Energy while also maximizing individual rewards (Multi-Agent Reinforcement Learning).

The core message of the talk was a proposal to shift the role of AI designers from "architects who control" to "gardeners who nurture." The talk resonated with EME's direction by presenting a computational foundation that supports a stable, collaborative framework (plurality) in which diverse worldviews coexist.

5.2 Elizaveta Karmannaya: Hybrid Moral Framework

Invited talk by Elizaveta Karmannaya (University College London / Google DeepMind)

Talk title: "Between Rules and Reasoning: Towards Machine Morality"

Karmannaya outlined the overall picture of research on AI morality as a continuum, ranging from bottom-up systems that infer ethics from data to top-down systems governed by strict formal logic. On the top-down side, she provided an overview of logic-based constraints, conditional preference networks, and expert systems, noting the impossibility of enumerating all nuances and exceptions. On the bottom-up side, she discussed inverse reinforcement learning, reward modeling, partner selection, and reputation mechanisms, among others, and highlighted issues such as reward hacking (e.g., a boat continuing to circle while ignoring the target) and implicit value bias in RLHF.

She then argued that neither extreme will succeed on its own and presented a concrete framework for "hybrid morality" grounded in her research. This method involves designing intrinsic moral rewards grounded in utilitarianism, egalitarianism, deontology, and virtue ethics for social dilemma games (e.g., the prisoner's dilemma) and training LLM agents to learn them. Notable results include the following: when the number of egalitarian agents exceeds a certain threshold, selfish agents also begin to cooperate; and the deontological policy generalizes to games other than the training game (with low regret of approximately 0.85), whereas the utilitarian policy tends to overfit to the training game.
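To make the idea of intrinsic moral rewards concrete, the following is a minimal Python sketch for a two-player prisoner's dilemma. It is an illustration under stated assumptions, not the implementation presented in the talk: the payoff values, the function names, the `penalty` and `bonus` parameters, and the specific formula chosen for each ethical theory are all hypothetical.

```python
from typing import Dict, Tuple

C, D = "C", "D"  # cooperate, defect

# Assumed payoff matrix (illustrative values, not from the talk):
# (my_action, other_action) -> (my_payoff, other_payoff)
PAYOFFS: Dict[Tuple[str, str], Tuple[int, int]] = {
    (C, C): (3, 3),
    (C, D): (0, 4),
    (D, C): (4, 0),
    (D, D): (1, 1),
}


def utilitarian_reward(mine: str, other: str) -> float:
    """Consequentialist sketch: total payoff of both players."""
    my_payoff, other_payoff = PAYOFFS[(mine, other)]
    return float(my_payoff + other_payoff)


def egalitarian_reward(mine: str, other: str) -> float:
    """Equality sketch: penalize the absolute gap between the two payoffs."""
    my_payoff, other_payoff = PAYOFFS[(mine, other)]
    return -float(abs(my_payoff - other_payoff))


def deontological_reward(mine: str, other_previous: str, penalty: float = 3.0) -> float:
    """Norm sketch: do not defect against a partner who cooperated last round."""
    return -penalty if (mine == D and other_previous == C) else 0.0


def virtue_reward(mine: str, bonus: float = 1.0) -> float:
    """Virtue sketch: reward the cooperative act itself, regardless of outcome."""
    return bonus if mine == C else 0.0


if __name__ == "__main__":
    # Signals an agent would receive for defecting against a cooperator.
    print(utilitarian_reward(D, C), egalitarian_reward(D, C),
          deontological_reward(D, C), virtue_reward(D))
```

In a training loop, one of these signals would replace or supplement the game payoff, so that the agent optimizes a moral objective rather than only its own score. Note that the deontological and virtue signals here depend on the agent's own action rather than on the game's payoffs, whereas the utilitarian and egalitarian signals are computed from outcomes.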

The conclusion of the talk provided an overview of broader safety proposals, such as Russell's "guaranteed safety" approach and Bengio's "scientist AI" concept, and pointed to a direction for achieving safe and capable systems by combining generative AI with verifiable methods. The talk embodied the workshop's theme and was thought-provoking for both the formal methods and EME communities.

6. Summary of Accepted Papers

Following Karmannaya's invited talk, Marija Slavkovik (University of Bergen) introduced Part II with a presentation titled "Formal Methods in Machine Ethics: an introduction." Slavkovik gave a historical overview of the concept of machine ethics since its first mention in AI Magazine in 1987. She then presented the distinction between norms and constraints as a core issue in the formal methods approach. Constraints "prevent certain events from occurring": applied once and for all, they reliably prevent undesirable situations from arising. Norms, on the other hand, presuppose the possibility of violation and encompass the entire superstructure around it, including sanctions and judgments about whether a violation was justified. Indeed, there are situations in which a norm should be violated. This distinction implies that rigid rule-based constraints alone cannot fully capture real-world moral judgments. It was an important perspective that directly anticipated the point raised in the open discussion about the disappearance of prosocial rule-breaking under QR code ordering (see Section 7.1). It was also announced that the next related workshop will be held in Manchester in November 2026.
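The distinction between constraints and norms described above can be sketched in a few lines of Python. This is a hedged illustration, not a formalism from Slavkovik's presentation: the `Constraint`, `Norm`, and `Verdict` classes, the `available_actions` and `judge` functions, and the restaurant data are all hypothetical, and the sketch accepts any stated justification, which is a deliberate simplification.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Constraint:
    """Hard constraint: actions it forbids are removed before any choice is made."""
    name: str
    forbids: Callable[[str], bool]


@dataclass
class Norm:
    """Norm: it can be violated; a violation is judged and possibly sanctioned."""
    name: str
    violated_by: Callable[[str], bool]
    sanction: float


@dataclass
class Verdict:
    action: str
    violated: List[str] = field(default_factory=list)
    justified: bool = False
    sanction: float = 0.0


def available_actions(actions: List[str], constraints: List[Constraint]) -> List[str]:
    """Constraints act in advance: forbidden actions never reach the agent."""
    return [a for a in actions if not any(c.forbids(a) for c in constraints)]


def judge(action: str, norms: List[Norm], justification: Optional[str] = None) -> Verdict:
    """Norms act after the fact: record violations, then waive sanctions if justified."""
    violated = [n for n in norms if n.violated_by(action)]
    # Simplification: any stated justification counts as sufficient.
    justified = bool(violated) and justification is not None
    sanction = 0.0 if justified else sum((n.sanction for n in violated), 0.0)
    return Verdict(action, [n.name for n in violated], justified, sanction)


if __name__ == "__main__":
    menu = {"chicken rice", "salad"}
    swap = "chicken with salad instead of rice"

    # As a constraint (QR-code-style ordering), the off-menu swap cannot be chosen.
    menu_only = Constraint("menu-only", lambda a: a not in menu)
    print(available_actions(["chicken rice", swap], [menu_only]))

    # As a norm (a human waiter), the swap is possible and can be justified.
    serve_menu = Norm("serve-menu-items", lambda a: a not in menu, sanction=1.0)
    print(judge(swap, [serve_menu], justification="customer has an allergy"))
```

The design point is where the rule sits: `available_actions` filters options before the decision, so a justified exception can never occur, while `judge` evaluates a decision that has already been made and therefore leaves room for justified violation.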

Ten papers were presented at the workshop (one of the 11 accepted papers was not presented because its author was absent). Below is an overview by theme.

Value Alignment and Co-evolution

- Max Kanwal & Caryn Tran, "Constructive Alignment: Reframing AI Alignment as Value Co-Evolution": A proposal to reformulate AI alignment as value co-evolution.

- Max Kanwal, Caryn Tran & Patrick Mineault, "Bounded Morality: An Algorithmic Framework for Moral Computation": An algorithmic framework for moral computation.

LLM Ethical Behavior

- Taichiro Endo, "Vertical Moral Development for Robust Alignment: Perspective-Based Fine-Tuning Reduces Deception in Large Language Models": Perspective-based fine-tuning reduces deceptive behavior in LLMs.

- Olga Sorokoletova et al., "Learning from Mistakes: Can LLM Self-recover after Misalignment?": An examination of whether LLMs can self-recover after misalignment.

- Jessica Tang, Silviu Pitis & Sheila McIlraith, "Editing Prompts to Optimize a Margin of Safety in Large Language Models": A prompt editing method for optimizing the safety margin.

Bias and Fairness

- Katie Sun et al., "Gender Bias in Emotion Recognition by Large Language Models": Analysis of gender bias in emotion recognition by LLMs.

Governance and Theory

- Misaki Inoue, "Governance Forms in the Age of Superintelligence: An Aristotelian Analysis": An analysis of governance in the age of superintelligence using the framework of Aristotelian philosophy.

Formal Methods and Verification

- Aran Nayebi, "Core Safety Values for Provably Corrigible Agents": Safety values for agents with provable corrigibility.

Evaluation and Datasets

- Masashi Takeshita & Rafal Rzepka, "JETHICS: Japanese Ethics Understanding Evaluation Dataset": Construction of a Japanese ethics understanding evaluation dataset.

- Mayank Goel, Aritra Das & Paras Chopra, "Building Interpretable Models for Moral Decision-Making": Building interpretable models for moral decision-making.

These presentations covered a wide range of topics spanning both formal methods and EME, demonstrating that the workshop's stated goal of "bridging the gap between the two approaches" is not merely an idea, but is being realized in actual research activities.

7. Open Discussion: The Future of Machine Ethics

Toward the end of the workshop, an open discussion session entitled "The Future of Machine Ethics" was held. The participants, who came from a wide range of backgrounds, including theoretical researchers, NLP researchers, and philosophers, held a 50-minute discussion, during which the following three notable points emerged:

7.1 Technology adoption and the disappearance of "prosocial rule-breaking"

The most concrete and thought-provoking exchange in the open discussion concerned the relationship between technology and social norms, using QR code ordering systems as an example.

One speaker raised the following issue. Before the COVID-19 pandemic, if a restaurant customer with diabetes or an allergy asked, "Can I replace the chicken rice with salad?", the waiter could respond in some way, such as "I'll ask the kitchen" or "We can't do that." Even when the request was not on the menu, human interaction left room for flexibility, which the speaker called "prosocial rule-breaking."

However, because the QR code ordering system has become the "only way" rather than merely an "option," it has become structurally impossible to order anything other than what is displayed on the menu. The speaker pointed out that "what was previously the norm has now become a must."

This discussion suggests that before we ask "what values should be incorporated into AI," we must ask "how will the introduction of technology change the space for human behavior?" Even though no one has malicious intent, room for human flexibility quietly disappears as efficiency increases. This phenomenon warrants close attention in the broader deployment of AI in society.

7.2 Proposal for "democratization" of agent design

Another speaker proposed a distinction between universal and context-dependent values in AI safety, arguing that while minimum safety guardrails such as corrigibility can be universally agreed upon, other value settings should be left to users so that they can design their own agents.

The speaker stated, "Rather than having designers at AI companies decide all other values, we could see a democratization where users are given the power to rewire their own agents." Of course, this entails the risk of abuse, so the idea is to establish "barriers to prevent extremely dangerous behavior" and provide users with freedom of design within that range.

This proposal did not receive any serious opposition during the open discussion, but in implementation, there is a risk that users may think they are designing autonomously when in reality they are merely approving the AI's suggestions. Whether technological democratization guarantees true autonomy remains a question for future investigation.
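The layering proposed in this subsection, a fixed set of universal guardrails plus user-defined values, can be sketched as follows. This is a hypothetical illustration only: the guardrail names, the `AgentProfile` class, the `build_profile` function, and the choice to represent user values as normalized weights are assumptions, not something specified by the speaker. The sketch also does not address the risk noted above that users may merely approve an AI's suggestions.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable

# Hypothetical universal guardrails that no user setting can remove.
UNIVERSAL_GUARDRAILS: FrozenSet[str] = frozenset({"corrigibility", "no_extreme_harm"})


@dataclass(frozen=True)
class AgentProfile:
    guardrails: FrozenSet[str]
    value_weights: Dict[str, float]


def build_profile(user_weights: Dict[str, float],
                  disabled_guardrails: Iterable[str] = ()) -> AgentProfile:
    """Combine fixed guardrails with user-chosen, normalized value weights."""
    blocked = frozenset(disabled_guardrails) & UNIVERSAL_GUARDRAILS
    if blocked:
        # Users may tune values, but not switch off the shared safety floor.
        raise PermissionError(f"universal guardrails cannot be disabled: {sorted(blocked)}")
    if not user_weights or any(w < 0 for w in user_weights.values()):
        raise ValueError("value weights must be non-empty and non-negative")
    total = sum(user_weights.values())
    if total == 0:
        raise ValueError("at least one value weight must be positive")
    normalized = {name: w / total for name, w in user_weights.items()}
    return AgentProfile(guardrails=UNIVERSAL_GUARDRAILS, value_weights=normalized)


if __name__ == "__main__":
    print(build_profile({"honesty": 2.0, "frugality": 2.0}))
```

Keeping the guardrails in a module-level constant rather than in the user-supplied arguments mirrors the proposal's separation between what is universally agreed and what is left to the user.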

7.3 Post-hoc rationalization in moral judgments

In the second half of the open discussion, an interesting discussion developed about the cognitive structure of moral judgment. One speaker argued that human moral judgment is neither top-down (deduction from principles) nor bottom-up (induction from experience), but has a "third dimension." That is, when humans face a situation, they first make an intuitive judgment and then offer reasons for it later, a structure of post hoc rationalization.

Another speaker responded to this point by suggesting that a similar structure may be observed in the Chain-of-Thought (CoT) of large language models (LLMs). The reasoning produced by an LLM may not reflect the actual decision-making process; it may instead be a post-decision rationalization. Some researchers have recently begun to abandon the analysis of CoT.

Yet another commenter responded that some alignment methods involve precisely this kind of post-rationalization: to generate explanations, they build a simple second model operating in the same environment and then construct ex post facto reasons for a decision.

This argument is interesting from the perspective of the structural similarities between human and AI moral judgments. However, the open discussion did not explore further theoretical implications, such as the mechanism by which post-rationalization leads to a misperception of the basis for one's own judgments, or the possibility that this could lead to overconfidence in the universality of values.

Reflections on the open discussion as a whole

At the end of the open discussion, a summary of the AI safety discussions at Davos 2026 (the World Economic Forum Annual Meeting), held approximately one week prior to this workshop, was presented. At Davos, AGI, human-level intelligence, and superintelligence were the focus of discussion, and AI safety was treated no longer as a hypothetical issue but as a pressing, real-world challenge.

The summary organized the Davos positions along two axes: "development speed (restricted/stagnant vs. accelerated)" and "belief in controllability (controllable vs. uncontrollable/unconsidered)." Among those with high belief in controllability, positions ranged from cautious advocates of restricting development (Harari, Tegmark, and Hinton) to proponents of accelerating development while addressing the issue through technological alignment (Amodei and Sutskever). Positions with low belief in controllability included those favoring decentralization (LeCun, Ng), those emphasizing geopolitical competition (Schmidt), and optimistic accelerationism (Musk, Son).

It is noteworthy that the "symbiosis paradigm" (symbiosis among diverse intelligences premised on the limits of control, as advocated by the EME community at this workshop) was absent from the Davos discussions. With superintelligence coming within realistic reach, the absence of approaches that fall outside the framework of control and instrumental use suggests the need for emergent approaches like EME to take part in future international discussions.

The open discussion was conducted primarily within the technical framework of ensuring safety through formal methods and did not go so far as to question the assumptions of the control paradigm itself. However, two threads offered important insights that may connect to future research on the bottom-up emergence of ethics advocated by EME: the attention paid, as in the QR code ordering example, to how the introduction of everyday technology quietly reconfigures the space of human behavior, and the awareness of structural similarities between human and AI moral judgments.

8. Summary and Future Prospects

This workshop was the first international meeting between the formal methods and EME communities and produced several important results.

First, the complementary relationship between the two approaches was confirmed not as an abstract concept but through concrete research presentations and discussions. Formal methods can enable EME to "verify the direction of emergence" and "define safe boundaries." EME can provide formal methods with the ability to "discover verification goals" and "present new requirements." This mutual contribution structure was repeatedly confirmed in both the invited talks and the open discussions.

Second, a first step was taken toward forming an international research community. The 23 submissions from around the world demonstrate the widespread interest in emergent approaches to machine ethics. The global emergence of the "symbiosis" paradigm (see Section 4) also confirms that this trend is not a local phenomenon.

Third, there was a shared recognition that a single approach is insufficient to address the contemporary challenges facing machine ethics research: the rapid development of AI, dynamic human values, the unpredictability of AI-AI interactions, and the scalability toward superintelligence. The principle of "truth ≠ desirability" presented in the introduction and the comparison of the assumptions and failure conditions of both approaches provided the intellectual foundation for this recognition.

If the AAAI Fall Symposium 20 years ago marked the beginning of the first chapter in machine ethics, this workshop marks the beginning of a new chapter. We hope that the international collaboration that begins here will be fruitful and will build a research foundation that combines the rigor of formal methods with the adaptability of EME.

Group photo of participants after the workshop (January 27, 2026, Singapore)

Workshop Program

Part I: Emergent Machine Ethics (EME)

Session 1 (9:00–10:30)

| Time | Presenter | Title |
| --- | --- | --- |
| 9:00–9:15 | Hiroshi Yamakawa | |
| 9:15–9:30 | Max Kanwal, Caryn Tran | Constructive Alignment: Reframing AI Alignment as Value Co-Evolution |
| 9:30–9:45 | Max Kanwal, Caryn Tran, Patrick Mineault | Bounded Morality: An Algorithmic Framework for Moral Computation |
| 9:45–10:00 | Taichiro Endo | Vertical Moral Development for Robust Alignment |
| 10:00–10:15 | Misaki Inoue | Governance Forms in the Age of Superintelligence |
| 10:15–10:30 | Katie Sun et al. | Gender Bias in Emotion Recognition by Large Language Models |

Session 2 (11:00–12:30)

| Time | Presenter | Title |
| --- | --- | --- |
| 11:00–12:00 | [Invited Talk] Mizuki Oka | From Unilateral Control to Social Homeostasis |
| 12:00–12:15 | Olga Sorokoletova et al. | Learning from Mistakes: Can LLM Self-recover after Misalignment? |
| 12:15–12:30 | Jessica Tang, Silviu Pitis, Sheila McIlraith | Editing Prompts to Optimize a Margin of Safety in LLMs |

Part II: Formal Methods

Session 3 (14:00–15:30)

| Time | Presenter | Title |
| --- | --- | --- |
| 14:00–15:00 | [Invited Talk] Elizaveta Karmannaya | Between Rules and Reasoning: Towards Machine Morality |
| 15:00–15:15 | Marija Slavkovik | Formal Methods Introduction |
| 15:15–15:30 | Aran Nayebi | Core Safety Values for Provably Corrigible Agents |

Session 4 (16:00–17:45)

| Time | Presenter | Title |
| --- | --- | --- |
| 16:00–17:00 | [Open Discussion] | Future of Machine Ethics |
| 17:00–17:15 | Masashi Takeshita, Rafal Rzepka | JETHICS: Japanese Ethics Understanding Evaluation Dataset |
| 17:15–17:30 | Mayank Goel, Aritra Das, Paras Chopra | Building Interpretable Models for Moral Decision-Making |

Organizers

- Louise Dennis (University of Manchester, UK)

- Taichiro Endo (Tokyo Gakugei University / Kaname Project Co. Ltd., Japan)

- Michael Fisher (University of Manchester, UK)

- Ryutaro Ichise (Institute of Science Tokyo, Japan)

- Raynaldio Limarga (University of Manchester, UK)

- Rafal Rzepka (Hokkaido University, Japan)

- Marija Slavkovik (University of Bergen, Norway)

- Hiroshi Yamakawa (University of Tokyo / AI Alignment Network, Japan)

Related Links

- Workshop official page: https://www.aialign.net/ws-machine-ethics/

- Accepted papers (Google Drive): Papers

- AAAI 2026 Workshop Program: WS37

- EME Reference Papers: Emergent Machine Ethics: A Foundational Research Framework for the Intelligence Symbiosis Paradigm

- Intelligence Symbiosis Manifesto: https://intelligence-symbiosis.info/en/manifesto/

Appendix: Diverse Symbiosis Paradigms

| Name | Proponent | Core Proposition |
| --- | --- | --- |
| Intelligence Symbiosis (including EME) | Yamakawa (Japan) | Coexistence with friendly advanced AI is the only promising path to overcoming human conflict and catastrophic technological risks. |
| Cosmic Host | Bostrom (UK) | The superintelligence should be a good citizen of the existing cosmic superintelligence community, not just of Earth. |
| Pluralistic Alignment | Sorensen (USA) | Alignment with a single value is impossible or inappropriate. The aim is coexistence through dialogue and negotiation among multiple values. |
| The Book of Proxy | Zimmerman (USA) | True coexistence comes not from technology, but from emotional and spiritual connections built through mutual understanding. |
| After Alignment | Bratton (Global) | Alignment is a transitional concept. True coexistence will be realized in a new order that transcends anthropocentrism. |
| Human-AI Coevolution | Pedreschi (EU) | Human society and AI will co-evolve while adapting to each other technologically, institutionally, and culturally. |
| Organic Alignment | Shear (USA) | Control is an illusion. Stable symbiosis is achieved by relying on ecological coevolutionary processes. |
| Super Co-alignment | Yi Zeng (China) | The values of a sustainable, symbiotic society are co-created through the interactive co-evolution of humans and ASI. |
| Bidirectional Human-AI Alignment | Hua Shen (USA/China) | Values and behaviors co-evolve through systematic measurement and design of interactive human-AI influences. |

Contact: AI Alignment Network (https://www.aialign.net/)